AI is both weapon and target: Noam Schwartz on the new threat landscape

Noam Schwartz of Alice explains why prompt injection is the new SQL injection and what enterprises deploying AI agents need to know about trust and safety.

Most enterprise security teams have a mental model shaped by traditional web vulnerabilities—SQL injection, XSS, CSRF. That model is already outdated. At HumanX 2026, Michael Grinich sat down with Noam Schwartz, CEO of Alice, to talk about why AI systems require a fundamentally different approach to trust and safety—and why the threat surface is expanding faster than most companies realize.

From content moderation to AI safety

Alice isn't new to this problem. Originally known as ActiveFence, the company spent years working with the largest consumer platforms to detect fraud, spam, impersonation, and abuse at scale. When AI moved from research labs into production, they brought that operational expertise to foundation model labs—helping with robustness testing, red teaming, and hardening models against misuse.

The rebrand to Alice reflects a shift in who needs this help. It's no longer just model labs. Fortune 500 enterprises are now deploying AI models, agents, and applications in front of real users—and they need to do it safely.

"If you have any product in production that can get untrusted data, gets access to personal information, and can call other tools—this is where you call us," Schwartz said.

That description covers a lot of ground. It's basically every AI agent architecture shipping today.

AI As both weapon and target

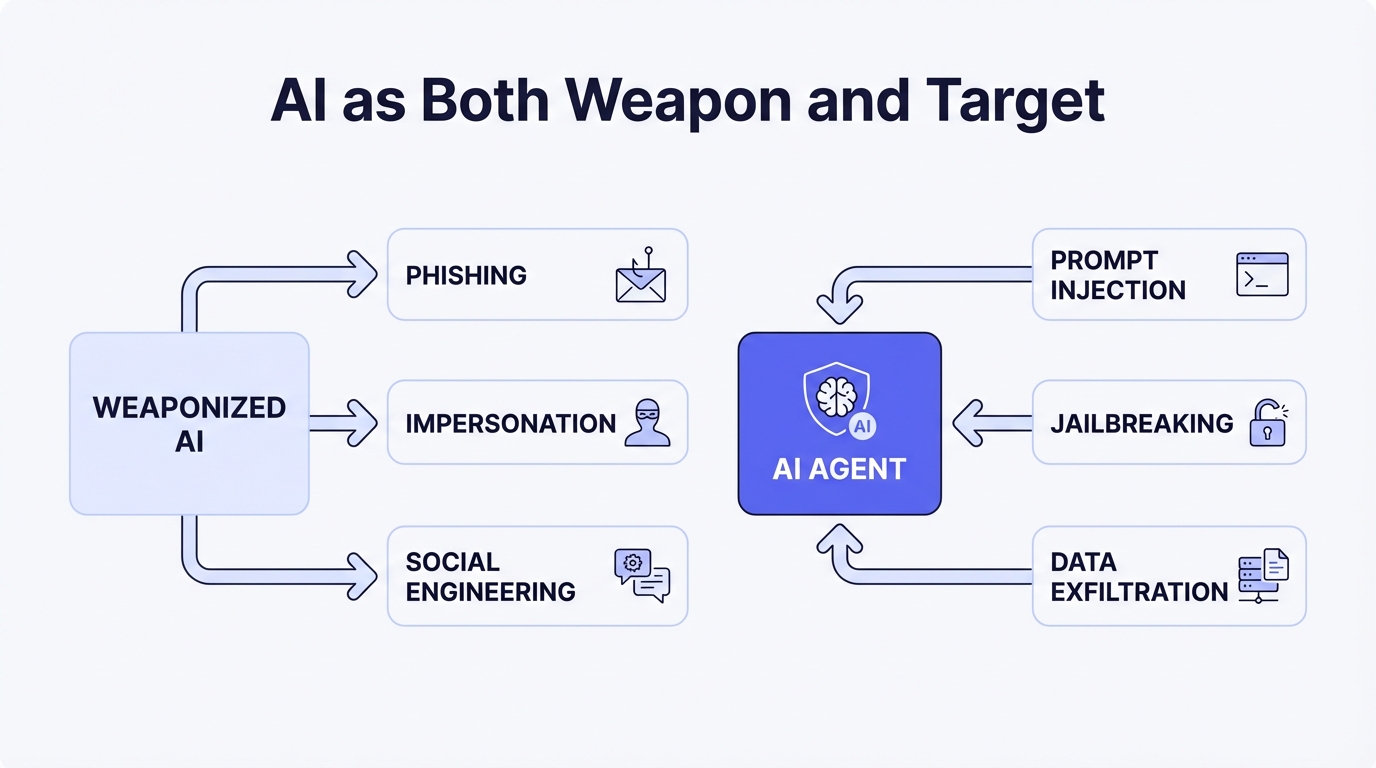

Schwartz framed the core challenge cleanly: AI can be both a weapon and a target.

Bad actors weaponize AI for autonomous cyberattacks, impersonation, and social engineering. Generative models lower the cost and skill required to produce convincing phishing campaigns, fake identities, and manipulated media at scale. Enterprises deploying AI, meanwhile, face a new class of attacks from the other direction—prompt injection, jailbreaking, data exfiltration through tool use—that can compromise their own systems from the inside.

This dual nature is what makes AI security fundamentally harder than traditional application security. The attack surface isn't just code—it includes the model's behavior, the data it can access, and the tools it can invoke, all of which can be manipulated through natural language input alone.

The democratization of attacks

What stood out most in the conversation: you no longer need to be a sophisticated state actor to break state-of-the-art models.

"English is the way you convince the model to do things it's not supposed to do," Schwartz said. "If SQL injection was the old threat, prompt injection is the new one."

That analogy lands hard. SQL injection exploited the gap between data and instructions in database queries. Prompt injection exploits an analogous gap in LLM processing—user input and system instructions are concatenated into the same context window, and the model has no reliable mechanism to distinguish between them. There is no equivalent of parameterized queries for natural language.

The risk compounds as open-source models grow more capable. Within months of a new frontier model release, open-weight alternatives with comparable benchmark performance appear. Those models can have their safety fine-tuning removed through further training or weight manipulation. The barrier to building a capable, unconstrained model is dropping fast.

The threat isn't theoretical. Any enterprise shipping an AI agent that handles untrusted input, accesses sensitive data, or calls external tools is exposed right now.

What's coming: multi-model architectures and a production reckoning

Schwartz made three predictions about where the market is headed.

Foundation model consolidation. Some foundation model companies will be acquired by hyperscalers. The economics of training frontier models—where a single training run can cost hundreds of millions of dollars in compute—favor companies with existing infrastructure at scale. Not every lab survives independently.

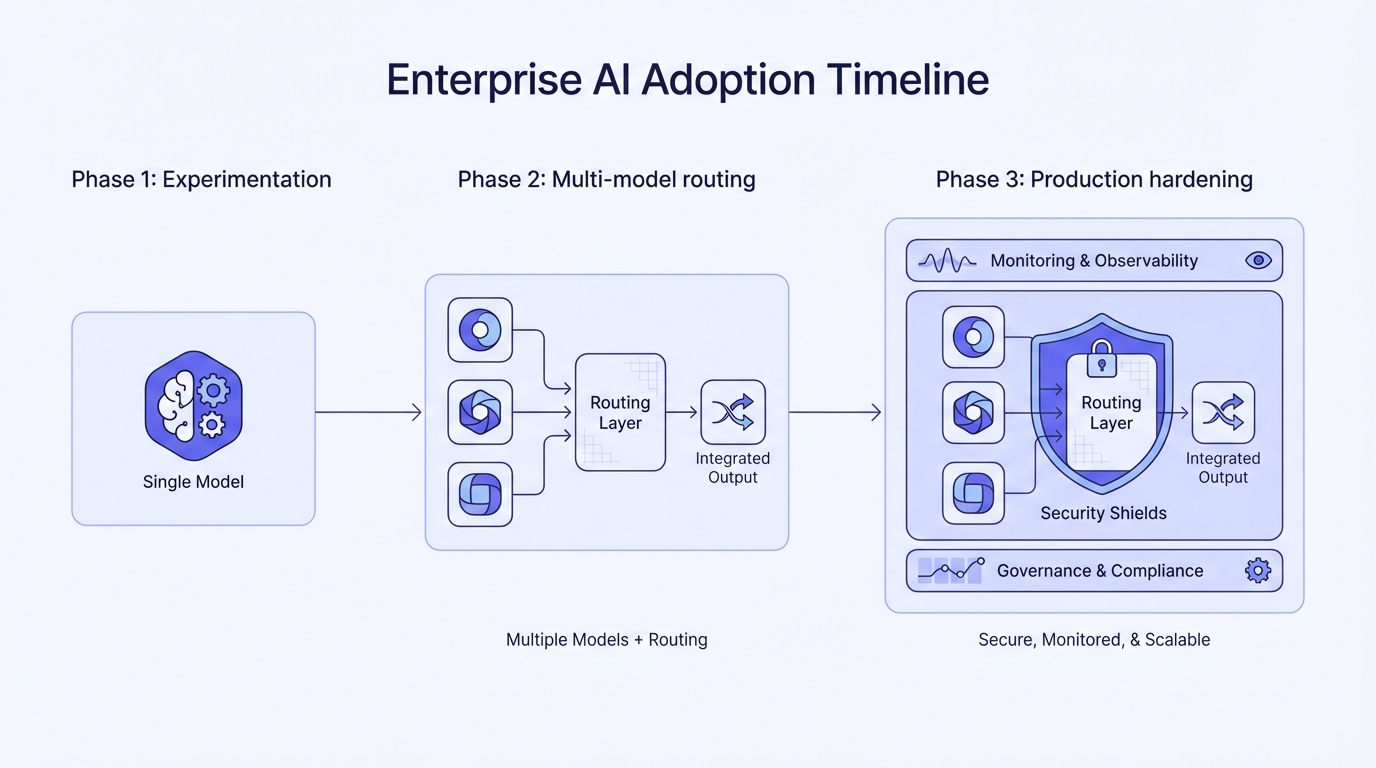

Multi-model architectures become the default. Enterprises will stop committing to a single model provider. Instead, they'll build routing logic that selects models based on task, cost, and risk profile. This is already happening—companies like WorkOS see this firsthand as customers build increasingly complex AI-powered workflows.

A production wake-up call. Early AI tool adopters are going to hit a wall. "A lot of their initial customers are going to say, 'I need this thing to work in production, at scale,'" Schwartz said. "And people are going to take a step back and build things on their own."

The gap between a demo and a production deployment is enormous—especially when security, compliance, and reliability are non-negotiable. Companies that treat AI safety as a day-one requirement rather than a post-launch afterthought are the ones that actually ship.

The new threat model

Prompt injection is the new SQL injection. The attack surface for AI systems is fundamentally different from traditional applications, the barrier to exploitation is lower than ever, and the stakes are higher because these systems have access to real data and real tools.

If you're building AI agents or applications that handle untrusted input, this isn't a problem you can defer. The threat model has already shifted.

This interview was recorded at HumanX 2026 in San Francisco.