From workforce management to AI orchestration: Assembled CEO John Wang on the Jevons paradox of customer support

Assembled CEO John Wang explains why AI is growing support teams, not shrinking them, and why orchestration is the real differentiator in customer support.

AI was supposed to shrink customer support teams. Assembled CEO John Wang is seeing the opposite happen—and he has a compelling explanation for why.

At HumanX 2026, WorkOS CEO Michael Grinich sat down with Wang to talk about what happens when you layer AI agents into complex, high-volume support operations. The conversation covered everything from domestic abuse edge cases to a future where AI agents call support on behalf of users.

From scheduling to AI orchestration

Assembled started as a workforce management platform, solving gnarly scheduling problems for support teams with thousands of agents spread across specializations, time zones, and labor laws. When LLMs arrived, the company saw a natural extension: build AI agents for customer support, then build the orchestration layer that decides when humans or AI should handle each interaction.

"The big problem we're trying to solve is how you manage all this complexity now that there are so many AI options," Wang told Grinich. "You want to provide the best experience to your customers—so it's about melding humans and AI together into one platform."

Assembled isn't positioning AI as a replacement for human agents. It's building the routing logic that sits above both.

The Jevons paradox of customer support

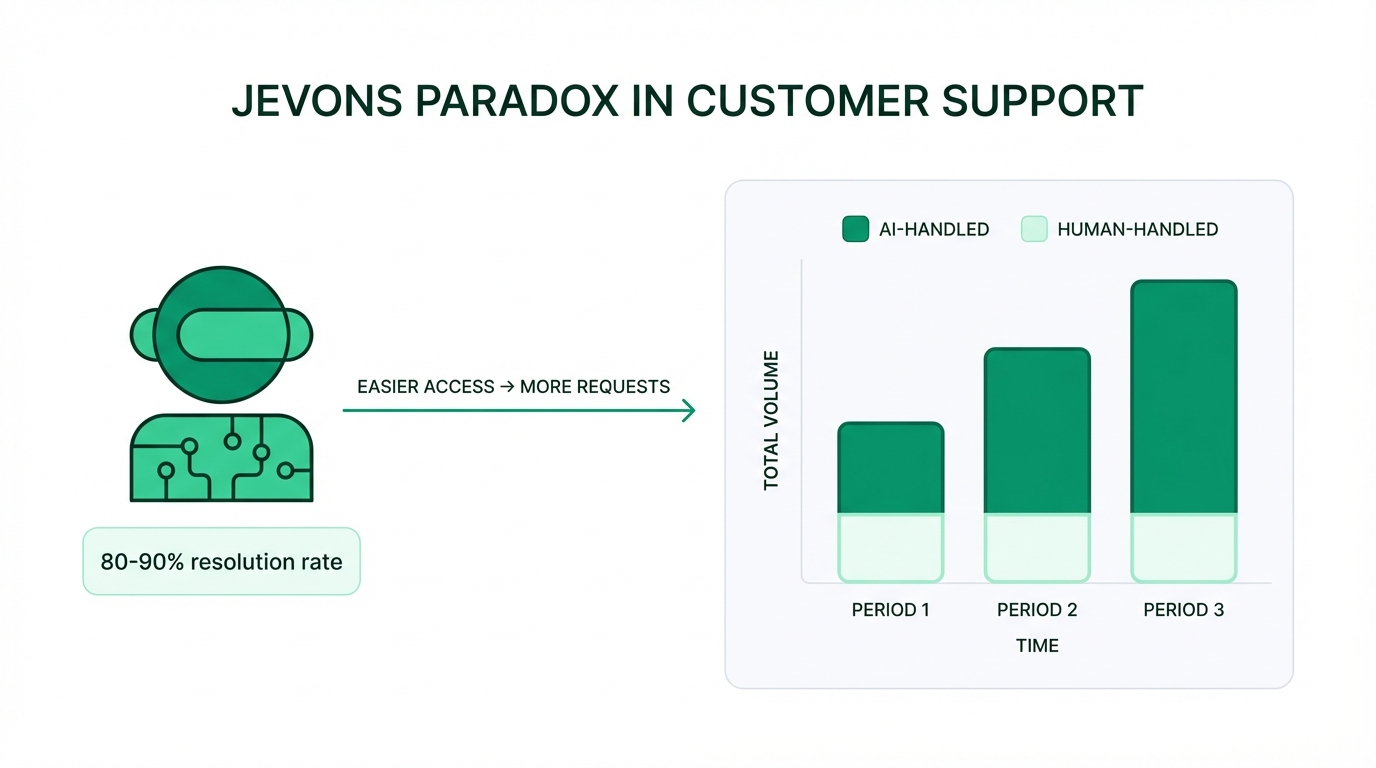

Here's the counterintuitive part: AI isn't shrinking support teams. It's growing them.

"We claim 80–90% resolution rates and that's true," Wang explained. "But the number of times people write in is much, much higher."

When you make it easier to get help, more people seek it, and demand rises to absorb the efficiency gains. This is analogous to the Jevons paradox, the 19th-century observation that improving the fuel efficiency of coal-burning engines didn't reduce total coal consumption, because cheaper energy drove broader adoption and new uses. In customer support, faster and more accessible AI resolution lowers the barrier to contact, which drives up total inbound volume.

Don't plan for headcount reduction. Plan for volume growth and a shift in the type of work your human agents handle.
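To make the arithmetic behind that advice concrete, here is a minimal Python sketch. It assumes a deliberately simple model that is not from the interview: before AI, humans handle every contact, and after AI, humans handle whatever the AI does not resolve. The function names, the baseline of 1,000 contacts, and the volume multiplier are all hypothetical; Wang gave a resolution rate but no figure for volume growth.

```python
def human_handled_contacts(
    baseline_contacts: float,
    ai_resolution_rate: float,
    volume_multiplier: float,
) -> float:
    """Contacts that still reach humans after AI is introduced.

    Simplified model (an assumption): total volume grows by
    `volume_multiplier`, and the AI resolves `ai_resolution_rate`
    of that larger total.
    """
    if not 0.0 <= ai_resolution_rate <= 1.0:
        raise ValueError("ai_resolution_rate must be between 0 and 1")
    total_contacts = baseline_contacts * volume_multiplier
    return total_contacts * (1.0 - ai_resolution_rate)


def break_even_multiplier(ai_resolution_rate: float) -> float:
    """Volume growth at which human workload equals the pre-AI baseline."""
    if not 0.0 <= ai_resolution_rate < 1.0:
        raise ValueError("ai_resolution_rate must be in [0, 1)")
    return 1.0 / (1.0 - ai_resolution_rate)


if __name__ == "__main__":
    # 0.85 sits inside the 80-90% range Wang cites; 8x growth is hypothetical.
    print(break_even_multiplier(0.85))
    print(human_handled_contacts(1000, 0.85, 8))
```

Under these assumptions, an 85% resolution rate means human workload exceeds the old baseline once inbound volume grows past roughly 6.7 times. At a hypothetical 8x, humans handle about 1,200 contacts where they used to handle 1,000.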

Where humans still win

AI handles routine questions well: business hours, order status, simple refunds. But Wang shared a striking example of where it falls short: a customer asking to return garments after the deadline because they had been involved in a domestic abuse trial and couldn't process the return in time.

That's not a flowchart problem. That's a situation requiring human empathy, judgment, and the authority to make an exception.

Complex technical questions hit a similar wall. When deep product knowledge intersects with a unique use case, the combination of context and nuance still favors a human. "There's nuance that people are still a little afraid to just let AI run," Wang said.

AI excels at high-frequency, well-defined tasks. Humans excel at low-frequency, high-stakes, ambiguous ones. The orchestration layer's job is knowing the difference.

The art of escalation

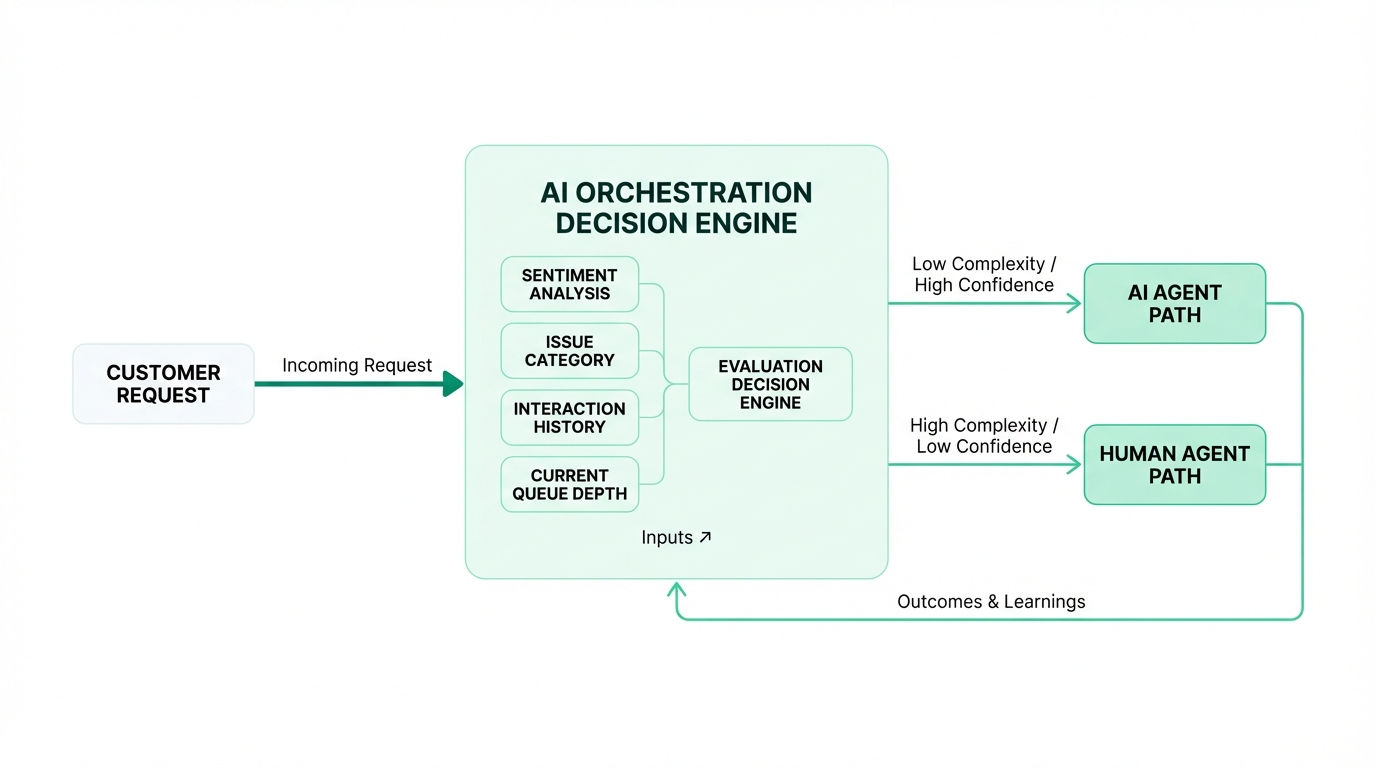

So how does Assembled decide when to hand off to a human? The orchestration engine considers multiple signals, among them how long the customer would currently wait for a human agent.

That wait-time signal is uniquely operational. If a human handoff means a 60-minute wait, the threshold for escalation shifts. The system weighs the cost of keeping the customer with AI against the cost of making them wait for a person. It's not a static ruleset; it's a dynamic calculation that changes with queue depth.

This is where Assembled's workforce management roots pay off. The company had already modeled capacity planning and real-time workload distribution. Now it is applying that same logic to human-AI routing decisions.
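The wait-time trade-off described in this section can be sketched in a few lines of Python. This is an illustration only, not Assembled's actual algorithm: the `EscalationInputs` fields, the cost units, and the simple expected-cost comparison are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationInputs:
    """Hypothetical inputs to one escalation decision."""

    ai_failure_risk: float        # estimated chance (0..1) the AI mishandles this conversation
    failure_cost: float           # assumed cost of a mishandled conversation, arbitrary units
    expected_wait_minutes: float  # current estimated wait for a human agent
    wait_cost_per_minute: float   # assumed cost of each minute the customer waits


def should_escalate(inputs: EscalationInputs) -> bool:
    """Escalate only when staying with the AI is expected to cost more than waiting."""
    cost_of_staying_with_ai = inputs.ai_failure_risk * inputs.failure_cost
    cost_of_waiting = inputs.expected_wait_minutes * inputs.wait_cost_per_minute
    return cost_of_staying_with_ai > cost_of_waiting


if __name__ == "__main__":
    # Same conversation, two different queue depths.
    short_queue = EscalationInputs(0.4, 100.0, 5.0, 1.0)
    long_queue = EscalationInputs(0.4, 100.0, 60.0, 1.0)
    print(should_escalate(short_queue))  # True: a 5-minute wait is cheap
    print(should_escalate(long_queue))   # False: a 60-minute wait outweighs the AI risk
```

The point of the sketch is that the decision depends on queue state as well as on the conversation itself: identical risk estimates produce different outcomes as the expected wait changes.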

Agents talking to agents

Wang sees a near-future where AI agents contact support on behalf of users. "We've seen interactions where someone's clearly using an AI to ask questions," he said. "I don't think it's that far off for agents to directly interface with support systems."

If an AI agent is representing a user, the support system needs to handle structured, programmatic interactions—not just natural language chat. The interface between customer-side AI and support-side AI starts to look more like an API than a conversation.
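As a rough illustration of what "more like an API" could mean, the Python sketch below models a support request as a structured payload that a customer-side agent sends and a support-side system validates. The schema is entirely hypothetical: the field names (`on_behalf_of`, `intent`, `order_id`, `details`) and the JSON wire format are assumptions for the example, not anything Wang or Assembled described.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict

# Fields the hypothetical support system refuses to proceed without.
REQUIRED_FIELDS = {"on_behalf_of", "intent", "order_id"}


@dataclass
class AgentSupportRequest:
    """A structured request made by an AI agent for a user (hypothetical schema)."""

    on_behalf_of: str  # identifier of the user the agent represents
    intent: str        # machine-readable intent, e.g. "request_return"
    order_id: str
    details: Dict[str, str] = field(default_factory=dict)


def to_wire(request: AgentSupportRequest) -> str:
    """Serialize the request to JSON for transmission."""
    return json.dumps(asdict(request), sort_keys=True)


def parse_request(payload: str) -> AgentSupportRequest:
    """Validate and parse an incoming payload on the support side."""
    data = json.loads(payload)
    if not isinstance(data, dict):
        raise ValueError("payload must be a JSON object")
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return AgentSupportRequest(
        on_behalf_of=data["on_behalf_of"],
        intent=data["intent"],
        order_id=data["order_id"],
        details=data.get("details", {}),
    )
```

Unlike free-form chat, a payload like this can be rejected up front when a required field is missing, which is one reason a structured interface suits agent-to-agent traffic.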

As for the broader market, Wang expects the AI agents themselves to become commoditized. The differentiation shifts to orchestration: deciding which agent to use, measuring how different agents perform under different conditions, and making that transparent and controllable for the teams managing it.

The orchestration layer is the product

The hard problem isn't building AI agents. It's managing them. Knowing when to deploy them, when to pull them back, how to blend them with human teams, and how to do all of that dynamically as conditions change.

The agent is table stakes. The orchestration is the moat.

This interview was recorded at HumanX 2026 in San Francisco.