MCP-UI: Breaking the Text Wall in AI Interactions

Liad Yosef and Ido Solomon from Monday.com demonstrate MCP-UI at MCP Night 2.0, showcasing how their framework brings rich, interactive user interfaces directly into AI agent conversations.

On August 7, 2025, MCP Night 2.0 brought together 700 engineers, founders, and researchers at the Regency Ballroom in San Francisco for an evening of demos showcasing how the Model Context Protocol ecosystem is maturing.

The event featured presentations from leading companies including Anthropic, OpenAI, Monday.com, Notion, and Block, highlighting both the opportunities and enterprise challenges in connecting AI systems to real-world tools and data.

MCP-UI: Moving Beyond the Text Wall

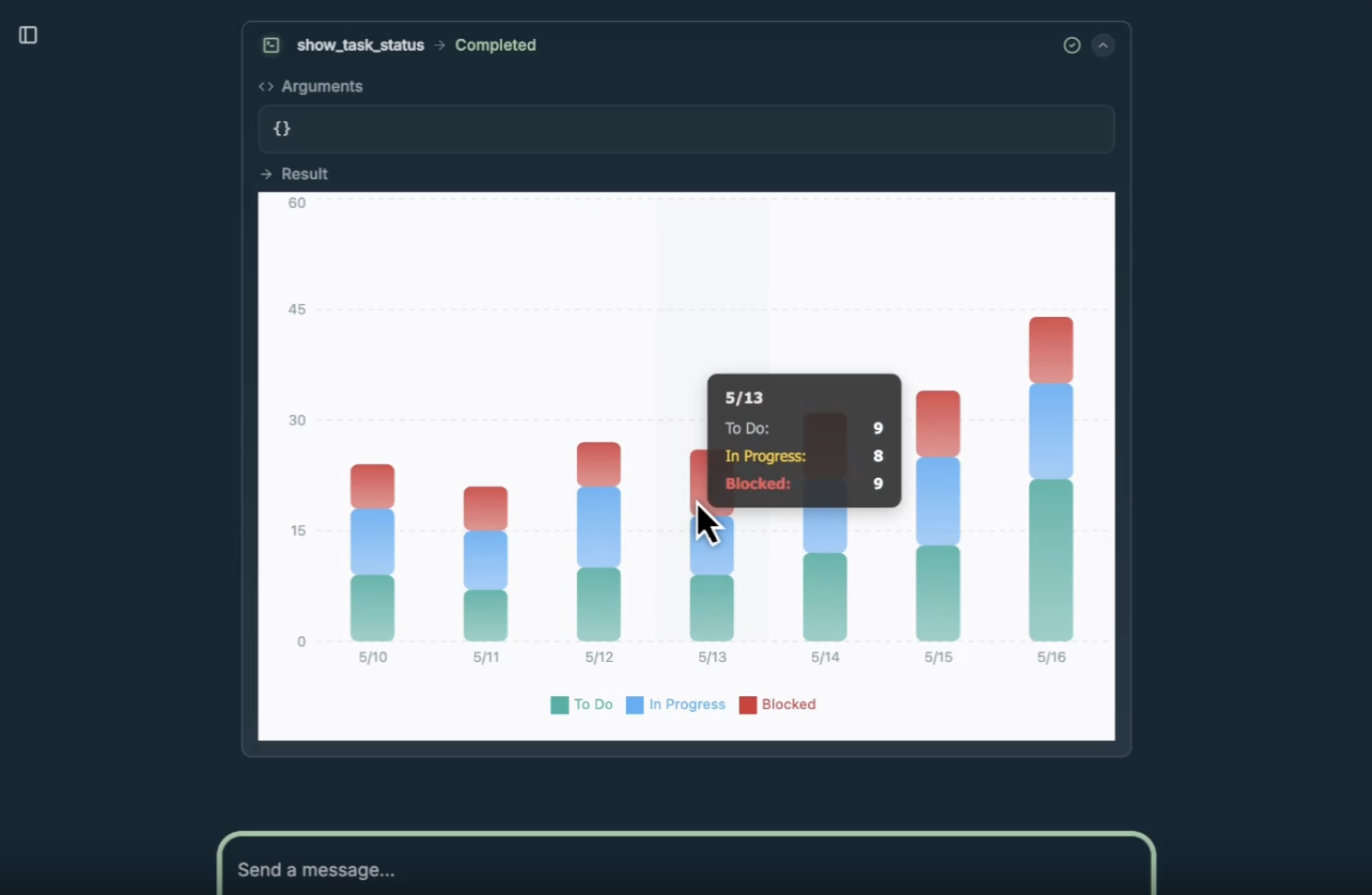

We've all experienced it—asking an AI agent for help and receiving walls of text that still require manual work to be useful.

Liad Yosef and Ido Solomon, both AI Leads at Monday.com and creators of the wildly popular GitMCP project, presented MCP-UI—a framework that brings rich, interactive user interfaces directly into AI agent conversations.

Beyond Text-Only Interactions

MCP-UI addresses a fundamental limitation in current AI interactions. While text-based interfaces work fine for early adopters and technical users, they create friction for complex workflows.

Imagine trying to book a flight, choose a hotel room, or configure a product entirely through text descriptions. It's cumbersome at best and often impossible to convey the full richness of the experience.

The demo emerged as a crowd favorite at MCP Night 2.0.

How MCP-UI Works

The framework enables MCP servers to construct and return interactive UI components as MCP Resources, which MCP clients can then choose to render. This creates a standardized way for applications to maintain their visual identity and user experience within AI conversations.

MCP-UI supports three delivery methods:

- Inline HTML embedded via srcDoc in sandboxed iframes
- Remote resources loaded in sandboxed iframes
- Remote DOM for direct client-side rendering
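The three delivery methods can be pictured as variants of a single resource payload. The TypeScript sketch below is illustrative only: the type names (`UIContent`, `UIResource`), the `toResource` helper, the `ui://` URI, and the MIME strings are assumptions chosen for this example, not the MCP-UI SDK's confirmed API.

```typescript
// Hypothetical model of the three delivery methods (not the real SDK types).
type UIContent =
  | { kind: "inlineHtml"; html: string }    // client renders via iframe srcDoc
  | { kind: "externalUrl"; url: string }    // client loads the URL in an iframe
  | { kind: "remoteDom"; script: string };  // client renders via Remote DOM

// Hypothetical resource envelope a server might return to the client.
interface UIResource {
  uri: string;      // identifier for this UI resource
  mimeType: string; // tells the client which rendering path to use
  text: string;     // the HTML, URL, or script payload
}

// Map each delivery method to a resource the client can dispatch on.
// The MIME strings are assumed values for illustration.
function toResource(uri: string, content: UIContent): UIResource {
  switch (content.kind) {
    case "inlineHtml":
      return { uri, mimeType: "text/html", text: content.html };
    case "externalUrl":
      return { uri, mimeType: "text/uri-list", text: content.url };
    case "remoteDom":
      return { uri, mimeType: "application/x-remote-dom-example", text: content.script };
  }
}

// Usage: a server-side tool wraps a small HTML snippet as a UI resource.
const greeting = toResource("ui://example/greeting", {
  kind: "inlineHtml",
  html: "<p>Hello from the server</p>",
});
console.log(greeting.mimeType);
```

Modeling the content as a discriminated union means the compiler flags any delivery method the helper forgets to handle.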

On the server side, developers can create interactive resources using simple APIs, while the client side uses a single UIResourceRenderer component to handle display and interaction logic.

The security model ensures all remote code executes in sandboxed iframes, maintaining both host and user security while preserving rich interactivity.
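To make the sandboxing idea concrete, here is a minimal sketch of how a client could turn inline HTML or a remote URL into sandboxed iframe markup. It is not how UIResourceRenderer is implemented; `FrameContent`, `escapeAttr`, and `iframeMarkup` are hypothetical names, and the default sandbox policy is an assumption.

```typescript
// Hypothetical content shapes for the two iframe-based delivery methods.
type FrameContent =
  | { kind: "inlineHtml"; html: string }
  | { kind: "externalUrl"; url: string };

// Escape a string so it is safe inside a double-quoted HTML attribute.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Build iframe markup: inline HTML goes in srcdoc, remote content in src.
// The sandbox attribute is always present; "allow-scripts" is an assumed default.
function iframeMarkup(content: FrameContent, sandbox: string = "allow-scripts"): string {
  const source =
    content.kind === "inlineHtml"
      ? `srcdoc="${escapeAttr(content.html)}"`
      : `src="${escapeAttr(content.url)}"`;
  return `<iframe sandbox="${escapeAttr(sandbox)}" ${source}></iframe>`;
}

// Usage: untrusted HTML from a server ends up inside a sandboxed frame.
console.log(iframeMarkup({ kind: "inlineHtml", html: '<b title="x">hi</b>' }));
```

With `sandbox="allow-scripts"` and no `allow-same-origin`, the browser runs the frame in an opaque origin, so embedded scripts can execute but cannot read the host page's DOM or cookies.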

Real-World Commerce Applications

The technology isn't just conceptual—it's already seeing significant adoption. Shopify has integrated MCP-UI support across their platform, providing merchants with interactive experiences that go far beyond traditional text-only AI interactions.

As their engineering blog explains, commerce UI is deceptively complex: "Take a product selector. The basic version seems simple—show an image, price, and 'Add to Cart' button. But real commerce quickly introduces complications: variants with dependent options, bundle builders with complex pricing rules, subscription options with frequency selectors, and inventory constraints that update in real-time."

Their MCP-UI implementation handles these complexities through an intent-based messaging system. When a user clicks "Add to Cart" inside an embedded component, the component doesn't modify state directly. Instead, it bubbles up an intent that the agent interprets, which preserves agent control while still enabling rich interactions.

Components can expose events like view_details, checkout, notify, and ui-size-change that agents can mediate throughout the purchase journey.
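The intent pattern can be sketched as a small message router. The event names below come from the list above; the payload fields, the `HostActions` interface, and the `handleIntent` function are hypothetical and only illustrate the idea that the component reports what the user wants while the host decides what happens next.

```typescript
// Hypothetical intent messages; event names are from the text, payloads are assumed.
type UIIntent =
  | { type: "view_details"; productId: string }
  | { type: "checkout" }
  | { type: "notify"; message: string }
  | { type: "ui-size-change"; height: number };

// Hypothetical capabilities the host application offers.
interface HostActions {
  sendToAgent(prompt: string): void; // forward user intent to the agent
  resizeFrame(height: number): void; // adjust the embedded frame's height
}

// Route each intent. The component never changes cart or order state itself.
function handleIntent(intent: UIIntent, host: HostActions): void {
  switch (intent.type) {
    case "ui-size-change":
      host.resizeFrame(intent.height); // layout only, no agent involvement
      return;
    case "view_details":
      host.sendToAgent(`User wants details for product ${intent.productId}`);
      return;
    case "checkout":
      host.sendToAgent("User wants to check out");
      return;
    case "notify":
      host.sendToAgent(`Component notification: ${intent.message}`);
      return;
  }
}

// Usage: a host that logs instead of calling a real agent.
const demoHost: HostActions = {
  sendToAgent: (prompt) => console.log("agent <-", prompt),
  resizeFrame: (height) => console.log("resize to", height),
};
handleIntent({ type: "view_details", productId: "sku-123" }, demoHost);
```

In a browser, messages like these would typically reach the host through `window.postMessage` from the sandboxed frame, with the host validating each message before acting on it.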

The Future of Agentic Interfaces

MCP-UI represents more than just a technical improvement—it's part of a broader shift in how we think about AI interactions. The presentation suggested new possibilities for voice and screen-based interfaces that move beyond traditional chat-based interactions.

Future versions might move beyond static HTML to AI-generated interfaces tailored to individual users' needs, preferences, and accessibility requirements.

The protocol isn't limited to visual interfaces either; it could extend to voice interactions, mobile native components, or entirely new interaction paradigms we haven't yet imagined.

As Block's team observed, "Instead of every company building separate integrations for each AI platform, MCP-UI creates a standard that works everywhere."

The implications extend far beyond prettier interfaces. We're looking at a future where AI agents don't just describe experiences—they deliver them directly.

As the evening's discussions revealed, this represents one of the key themes emerging from the MCP ecosystem: the most successful implementations don't simply wrap existing APIs, but thoughtfully design interfaces that reflect how people actually work and think about their tasks.

The technology is ready, but as both the Shopify and Block teams emphasize, adoption is the next frontier. Major players are already on board, with Shopify providing MCP support for all their stores and comprehensive tooling becoming available across the ecosystem.

Liad and Ido's demo at MCP Night 2.0 offered an early look at that shift in practice.

The text wall is finally coming down.