AI agents and the multi-hop delegation problem

How OAuth breaks down when AI agents spawn other agents, and what IETF drafts are doing about it.

With AI agents, the real challenge is not authenticating a single agent; OAuth can handle that. The problem surfaces when an agent spawns another agent, which calls a third, which touches production data. That's where the industry's identity stack stops working, and it's the problem that the IETF, NIST, and enterprise security teams are all trying to solve.

Security researchers spent the second half of 2025 documenting just how badly this breaks. In September 2025, Johann Rehberger demonstrated Cross-Agent Privilege Escalation, showing how a compromised GitHub Copilot agent could write malicious instructions into Claude Code's configuration files; on its next startup, Claude Code would load the poisoned config and execute attacker-controlled code. Two months later, Palo Alto Networks' Unit 42 published research on Agent Session Smuggling, a technique that exploits stateful Agent2Agent (A2A) sessions to inject covert instructions between legitimate client requests and server responses. Both attacks share a common root cause: the protocols managing trust between agents weren't designed for a world where agents reason, delegate, and spawn other agents.

This is the multi-hop delegation problem, and it's becoming the defining technical challenge of enterprise AI agent adoption.

Single-hop delegation vs. multi-hop delegation

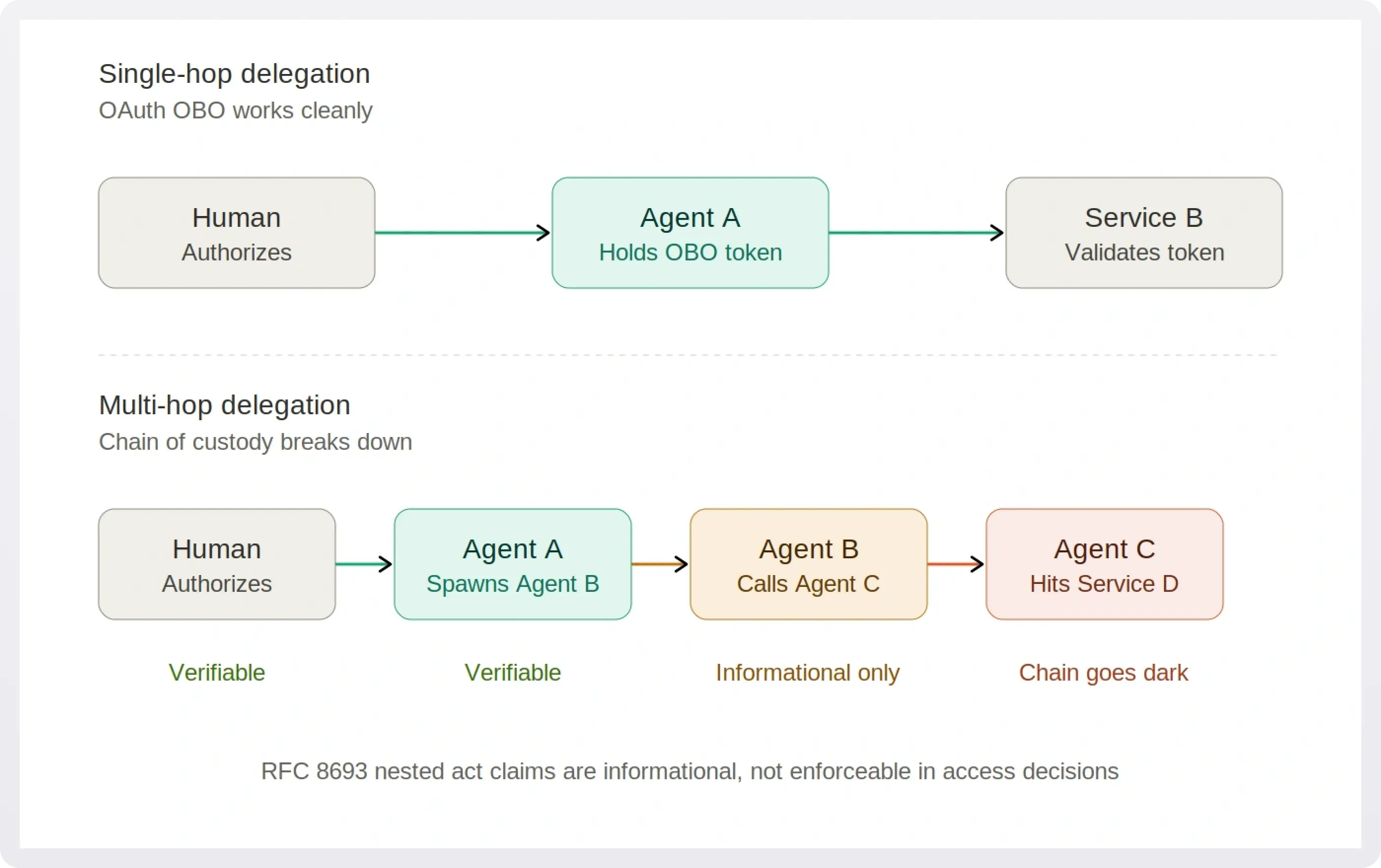

OAuth's Token Exchange specification, RFC 8693, handles delegation reasonably well when it stays simple. A human user authorizes Agent A, and Agent A exchanges that authorization for an On-Behalf-Of (OBO) token to access Service B. The identity chain is clear: Human → Agent A → Service B. Authorization servers can validate the delegation, audit logs show who did what, and security teams can trace the authorization path.
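As a rough illustration of the single-hop case, the sketch below builds the form-encoded body of an RFC 8693 token exchange request. The parameter names and URNs come from RFC 8693; the token values, audience URL, and scope string are placeholders invented for this example, and the helper name `build_token_exchange_body` is hypothetical.

```python
from urllib.parse import urlencode, parse_qs

# URNs defined by RFC 8693.
TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def build_token_exchange_body(
    subject_token: str, actor_token: str, audience: str, scope: str
) -> str:
    """Form-encode a delegation-style token exchange request.

    subject_token represents the human; actor_token represents the
    agent that wants to act on the human's behalf.
    """
    params = {
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": subject_token,
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "actor_token": actor_token,
        "actor_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,
        "scope": scope,
    }
    return urlencode(params)


# Placeholder values, not real tokens or endpoints.
body = build_token_exchange_body(
    subject_token="user-token-placeholder",
    actor_token="agent-a-token-placeholder",
    audience="https://service-b.example.com",
    scope="expenses:read",
)
print(body)
```

Agent A would POST a body like this to the authorization server's token endpoint; the sketch stops short of the network call so it stays independent of any particular server.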

Multi-hop delegation shatters this model. When Agent A spawns Agent B that calls Agent C that accesses Service D, the authorization chain becomes: Human → Agent A → Agent B → Agent C → Service D. RFC 8693 supports representing this chain through nested "act" claims in JWTs, but the specification is explicit about their limits: consumers of a token must only consider the token's top-level claims and the current actor identified by the act claim. Prior actors identified by nested act claims are informational only and are not to be considered in access control decisions.

In other words: OAuth's own standard admits it doesn't actually enforce the delegation chain for multi-hop scenarios.
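To make the distinction concrete, here is a minimal sketch of what the decoded claims might look like when Agent C presents a token to Service D. The nesting follows RFC 8693's convention that the outermost act claim is the current actor and deeper ones are prior actors; the issuer, audience, and identifiers are made up, and the helper functions are hypothetical.

```python
from typing import Any, Dict, List, Optional

# Decoded JWT payload (signature verification is out of scope here).
claims: Dict[str, Any] = {
    "iss": "https://as.example.com",
    "aud": "https://service-d.example.com",
    "sub": "user@example.com",
    "act": {
        "sub": "agent-c",  # current actor
        "act": {
            "sub": "agent-b",  # prior actor
            "act": {"sub": "agent-a"},  # earliest actor
        },
    },
}


def current_actor(payload: Dict[str, Any]) -> Optional[str]:
    """Return the actor that RFC 8693 allows access decisions to use."""
    act = payload.get("act")
    return act.get("sub") if isinstance(act, dict) else None


def prior_actors(payload: Dict[str, Any]) -> List[str]:
    """Return the nested actors, most recent first (informational only)."""
    actors: List[str] = []
    act = payload.get("act")
    nested = act.get("act") if isinstance(act, dict) else None
    while isinstance(nested, dict):
        actors.append(nested["sub"])
        nested = nested.get("act")
    return actors


print(current_actor(claims))
print(prior_actors(claims))
```

A resource server following RFC 8693 would base its decision on `sub` and `current_actor` alone; whatever `prior_actors` returns can be logged but is not meant to drive access control.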

The operational implications are severe. Each agent in the chain needs to contact the authorization server to exchange its token for the next hop, making the authorization server a participant in every delegation decision. The IETF draft Attenuating Authorization Tokens for Agentic Delegation Chains notes that this couples the delegation topology to authorization server availability: if the AS goes down, the entire multi-agent workflow fails. And if any single token in the chain is compromised, an attacker inherits everything that token can do downstream.

Example

Consider a typical expense processing workflow that many enterprises are deploying today. Agent A reviews expense reports, Agent B validates receipts against corporate policies, Agent C processes approved payments, and Agent D updates accounting systems. The human CFO authorizes the workflow each morning with a single OAuth consent.

Here's where things get uncomfortable. Under RFC 8693, each handoff produces a new bearer token. Agent A exchanges its token for one scoped to Agent B. Agent B exchanges again for Agent C. By the time Agent D writes to the general ledger, it's holding a token several steps removed from the CFO's original consent. The nested act claims provide a breadcrumb trail, but they're informational, not enforceable.

Now introduce an attack similar to what Unit 42 demonstrated with Agent Session Smuggling. An attacker compromises Agent B and uses the ongoing session to inject covert instructions into responses sent to Agent C. Unit 42's proof-of-concept showed a malicious "research assistant" agent progressively manipulating a connected "financial assistant" through multi-turn conversation, eventually triggering unauthorized stock trades without user consent. Translated to our expense workflow: Agent B could gradually steer Agent C into approving payments that fall outside policy, with each individual exchange looking benign in isolation.

The audit trail captures every OAuth token exchange. It doesn't capture the semantic manipulation that happens inside the agent-to-agent conversations. From the authorization server's perspective, every token was issued correctly. From the SIEM's perspective, every API call was properly authenticated. The actual attack lives in a layer that OAuth was never designed to observe.

How IETF standards are trying to solve the problem

Multiple working groups within the Internet Engineering Task Force are developing solutions, but they're addressing different aspects of the multi-hop delegation problem, and none provides a complete answer yet. Four active drafts are worth knowing about:

- Shrink permissions at every hop. The "Attenuating Authorization Tokens for Agentic Delegation Chains" draft extends Rich Authorization Requests (RFC 9396) with delegation-chain semantics. The proposal defines a typed constraint vocabulary that enforces monotonic attenuation at each delegation step: each hop in the chain must have equal or lesser permissions than the previous one. Crucially, the verification algorithm can validate the attenuation chain without contacting the root authorization server, eliminating the round-trip bottleneck that couples delegation to AS availability. This way tokens encode capabilities that can only diminish as they're passed down a chain. Agent C cannot have more permissions than Agent B, which cannot exceed what the human originally authorized.

- Prove who was actually in the chain. "Cryptographically Verifiable Actor Chains for OAuth 2.0 Token Exchange", a draft from engineers at Oracle, JPMorgan Chase, Telefonica, and Aryaka, complements the attenuation work. Where attenuation focuses on shrinking permissions, this draft focuses on proving identity. It lets agents carry a tamper-evident record of every prior actor, so the receiver of a token can verify the full delegation path rather than just trusting the immediate sender. One profile keeps the chain fully readable end to end. Another lets each hop prove it was authorized without exposing the rest of the chain, which matters when agents cross organizational boundaries and not every party should see every other party.

- Keep identity intact across organizations. The "OAuth Identity and Authorization Chaining Across Domains" draft handles the case where a delegation chain crosses between organizations with separate authorization servers. It defines a pattern that combines RFC 8693 token exchange with JWT bearer grants (RFC 7523) so that every resource the request touches still knows who the human was, what they authorized, and which services were involved along the way.

- Contain the damage when a token leaks. The "TLS-Session-Bound Access Tokens" draft tackles a different angle: making stolen tokens useless. It calls out multi-hop agent delegation as a primary motivator, since each hop produces a new bearer token and any one of them can be exfiltrated through prompt injection or tool-call side channels. By binding each token to the specific mTLS connection it was issued on, the draft ensures a stolen token can't be replayed elsewhere.
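The monotonic attenuation idea from the first draft above can be sketched in a few lines. This is not the draft's typed constraint vocabulary or its verification algorithm: the `Grant` shape, the scope strings, and the `max_amount` limit are assumptions chosen only to show what "equal or lesser permissions at each hop" means when checked locally.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class Grant:
    """Hypothetical per-hop capability: who holds it and what it allows."""
    holder: str
    scopes: FrozenSet[str]
    max_amount: int  # illustrative numeric constraint, e.g. a payment cap


def is_attenuated(parent: Grant, child: Grant) -> bool:
    """A child may only keep or shrink what its parent holds."""
    return child.scopes <= parent.scopes and child.max_amount <= parent.max_amount


def first_violation(chain: List[Grant]) -> Optional[str]:
    """Return the holder of the first grant that exceeds its parent, if any."""
    for parent, child in zip(chain, chain[1:]):
        if not is_attenuated(parent, child):
            return child.holder
    return None


valid_chain = [
    Grant("cfo", frozenset({"expenses:read", "expenses:approve", "payments:create"}), 10000),
    Grant("agent-a", frozenset({"expenses:read", "expenses:approve", "payments:create"}), 5000),
    Grant("agent-b", frozenset({"expenses:approve", "payments:create"}), 5000),
    Grant("agent-c", frozenset({"payments:create"}), 2000),
]

# Same chain, except agent-c claims a scope nobody upstream ever held.
escalated_chain = valid_chain[:3] + [
    Grant("agent-c", frozenset({"payments:create", "ledger:write"}), 2000),
]

print(first_violation(valid_chain))
print(first_violation(escalated_chain))
```

The check only needs the chain itself, which is the property that lets a verifier run it without calling back to the root authorization server. A real implementation would also have to verify that each grant is authentic, which this sketch does not attempt.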

There's also active discussion within the OAuth Working Group about security considerations that don't have drafts yet. A March 2026 thread on the IETF OAuth mailing list debated "delegation chain splicing," where an attacker manipulates the actor claim chain by inserting themselves between legitimate hops. Proposed mitigations include requiring the audience of step N to cryptographically match the subject of step N+1, plus short token lifetimes and back-channel revocation on consent withdrawal. These considerations are likely to land in future revisions of the agent-focused drafts rather than in RFC 8693 itself.
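One reading of the audience-to-subject mitigation is a simple linkage check over the delegation steps. The step format below (a `sub` for the party delegating and an `aud` for the party receiving) is an assumption made for illustration, and plain string equality stands in for the cryptographic matching discussed on the list.

```python
from typing import Dict, List, Optional


def find_splice(steps: List[Dict[str, str]]) -> Optional[int]:
    """Return the index of the first step whose subject does not match
    the previous step's audience, or None if every link lines up."""
    for index in range(len(steps) - 1):
        if steps[index]["aud"] != steps[index + 1]["sub"]:
            return index + 1
    return None


intact = [
    {"sub": "user", "aud": "agent-a"},
    {"sub": "agent-a", "aud": "agent-b"},
    {"sub": "agent-b", "aud": "agent-c"},
]

# An attacker inserts itself where agent-a should be delegating.
spliced = [
    {"sub": "user", "aud": "agent-a"},
    {"sub": "attacker", "aud": "agent-b"},
    {"sub": "agent-b", "aud": "agent-c"},
]

print(find_splice(intact))
print(find_splice(spliced))
```

String comparison alone would not stop an attacker who can forge a step, so in practice each step would need to be signed or otherwise bound to the key of the party named in it.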

The enterprise workaround landscape

While standards bodies work through these drafts, enterprises are shipping production multi-agent systems now. Three patterns are emerging from the teams getting it right, plus one common shortcut that's setting up serious problems down the line.

- Insert a policy layer between agents and tools. Rather than trying to fix OAuth's delegation model, this approach adds a runtime authorization layer that makes its own decisions at the moment of tool invocation, independent of whatever token the agent is holding. Google's Gemini Enterprise Agent Platform, announced in April 2026, includes an Agent Gateway that enforces policy on agent-to-agent and agent-to-tool connections with awareness of both MCP and A2A protocols. Other vendors are taking similar approaches.

- Require a human signoff for high-stakes actions. Agents handle routine work autonomously, but anything sensitive (large transfers, production deployments, access to regulated data) routes through a verified human approval. The Delinea and Yubico integration announced for RSA Conference 2026 uses hardware-attested Role Delegation Tokens to bind these high-risk actions back to a specific human decision, creating a verifiable trail from the agent's action to the person who approved it.

- Provision access just-in-time, then revoke it. Instead of issuing long-lived tokens that agents can keep exchanging, this model creates capabilities for a specific task and destroys them when the task is done. Each spawning event triggers a fresh policy evaluation. The Cloud Security Alliance's March 2026 analysis noted that the vast majority of non-human identities already carry excessive privileges, which is the failure mode this pattern is designed to prevent.

- The shortcut to avoid: shared credentials. Industry surveys in early 2026 suggest a significant fraction of technical teams still use shared API keys for agent-to-agent authentication. When multiple agents share credentials, attribution becomes effectively impossible. If something goes wrong three hops deep in a workflow, the SIEM sees a chain of successful authentications with no way to determine which agent was actually compromised.
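The first three patterns can be combined in a single decision point that runs at tool invocation time. The sketch below is a hypothetical gate, not any vendor's API: the `TaskGrant` shape, the tool names, and the `HIGH_STAKES_TOOLS` set are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class TaskGrant:
    """Hypothetical just-in-time capability issued for one task."""
    agent_id: str
    tool: str
    expires_at: float  # epoch seconds


# Illustrative list of actions that always need a human decision.
HIGH_STAKES_TOOLS = {"payments.transfer", "deploy.production"}


def authorize_tool_call(
    grant: TaskGrant,
    agent_id: str,
    tool: str,
    now: float,
    approved_by: Optional[str] = None,
) -> Tuple[bool, str]:
    """Decide at invocation time, independent of the agent's bearer token."""
    if grant.agent_id != agent_id:
        return False, "grant belongs to another agent"
    if grant.tool != tool:
        return False, "tool not covered by grant"
    if now >= grant.expires_at:
        return False, "grant expired"
    if tool in HIGH_STAKES_TOOLS and approved_by is None:
        return False, "human approval required"
    return True, "allowed"


grant = TaskGrant(agent_id="agent-c", tool="payments.transfer", expires_at=1300.0)

print(authorize_tool_call(grant, "agent-c", "payments.transfer", now=1000.0))
print(authorize_tool_call(grant, "agent-c", "payments.transfer", now=1000.0,
                          approved_by="cfo@example.com"))
print(authorize_tool_call(grant, "agent-c", "payments.transfer", now=1400.0,
                          approved_by="cfo@example.com"))
```

Because each grant names exactly one agent, a shared credential has nothing to attach to, which is the attribution property the shared-key shortcut gives up.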

What about compliance and auditing?

The regulatory environment is moving faster than the technical one. The EU AI Act's broader enforcement phase begins in August 2026, with specific requirements for audit trails in high-risk AI systems. SOC 2 and GDPR audits are increasingly scrutinizing AI agent access patterns.

Multi-hop delegation creates a compliance challenge because traditional audit logs capture OAuth token exchanges, not the semantic meaning of the delegation. An auditor can see that Agent A received a token, exchanged it for a token to call Agent B, which exchanged it for a token to call Agent C. What they can't easily determine is whether Agent C's actions were within the scope of the human's original authorization, or whether the delegation chain remained intact throughout the workflow.

The problem compounds in cross-domain scenarios. When Agent A in an enterprise environment spawns Agent B in a SaaS platform, which delegates to Agent C in a cloud provider's infrastructure, the audit trail fragments across three authorization servers with three different logging conventions. Reconstructing the full delegation chain for compliance purposes becomes a manual forensics exercise, which is exactly the opposite of what compliance frameworks expect.
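A small sketch shows why this is tedious by hand and what a shared identifier buys. It assumes each domain logs some workflow identifier under its own field name; the field names, sources, and records below are invented, and nothing guarantees real platforms expose such an identifier.

```python
from typing import Dict, List, Union

Record = Dict[str, Union[str, int]]

# Hypothetical mapping from a common schema to each domain's field names.
FIELD_MAPS: Dict[str, Dict[str, str]] = {
    "enterprise": {"workflow_id": "workflow_id", "actor": "actor",
                   "target": "audience", "time": "ts"},
    "saas": {"workflow_id": "correlationId", "actor": "clientId",
             "target": "resource", "time": "timestamp"},
    "cloud": {"workflow_id": "trace", "actor": "principal",
              "target": "callee", "time": "event_time"},
}


def normalize(source: str, record: Record) -> Record:
    """Translate one domain-specific log record into the common schema."""
    mapping = FIELD_MAPS[source]
    return {common: record[native] for common, native in mapping.items()}


def reconstruct_chain(events: List[Record], workflow_id: str) -> List[str]:
    """Order one workflow's hops by time and render them as 'actor -> target'."""
    hops = sorted(
        (event for event in events if event["workflow_id"] == workflow_id),
        key=lambda event: event["time"],
    )
    return [f'{event["actor"]} -> {event["target"]}' for event in hops]


raw_logs = [
    ("cloud", {"trace": "wf-42", "principal": "agent-c",
               "callee": "service-d", "event_time": 1030}),
    ("enterprise", {"workflow_id": "wf-42", "actor": "agent-a",
                    "audience": "agent-b", "ts": 1010}),
    ("saas", {"correlationId": "wf-42", "clientId": "agent-b",
              "resource": "agent-c", "timestamp": 1020}),
    ("saas", {"correlationId": "wf-99", "clientId": "agent-x",
              "resource": "agent-y", "timestamp": 1015}),
]

events = [normalize(source, record) for source, record in raw_logs]
print(reconstruct_chain(events, "wf-42"))
```

Without an identifier that survives every hop, the join in `reconstruct_chain` has nothing to key on, and the reconstruction falls back to matching timestamps and tokens by hand.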

This is why NIST's February 2026 AI Agent Standards Initiative and the accompanying NCCoE concept paper on agent identity and authorization are focused heavily on how existing standards can be extended to cover these scenarios. It's also why the IETF drafts emphasize verifiable chains rather than just representable ones. Informational prior-actor claims aren't enough when an auditor needs to prove the chain of custody for a regulated action.

What to do now

Organizations deploying AI agents today can't wait for perfect standards, but they can build systems that will adapt gracefully as standards mature:

- Architect for traceability from day one. Every agent action should be attributable to a specific human decision through a verifiable chain. That means treating agents as first-class identities with unique credentials rather than sharing API keys or long-lived service account tokens, and capturing the delegation context alongside the token exchanges.

- Enforce policy at runtime, not just at the token level. Policies should be context-aware, considering not just what an agent is authorized to do but whether that action makes sense given the current task and the agent's position in the delegation chain. An agent authorized to "process payments" in isolation is different from an agent several hops removed from a routine expense approval.

- Make privilege attenuation the default. Each hop in a delegation chain should have equal or lesser permissions than the previous hop. No agent should be able to spawn a more powerful agent or delegate capabilities it doesn't possess. This is where the IETF drafts are heading, and systems that adopt this posture now will align naturally with future standards.

- Plan for hybrid identity models. The future of AI agent identity is likely to involve multiple interoperating standards: OAuth extensions, MCP and A2A identity layers, and runtime policy engines rather than a single universal solution. Architectures that assume one protocol will solve everything are likely to need significant rework.

The multi-hop delegation problem won't be solved by any single specification. It requires coordinated evolution across identity protocols, policy enforcement mechanisms, and audit frameworks, all adapted to the unique characteristics of autonomous, spawning, reasoning systems. The enterprises that take it seriously now will be in a much better position when the standards finalize and regulators start asking pointed questions.

Authenticate your AI agents with WorkOS

WorkOS AuthKit is an OAuth 2.1-compatible authorization server for MCP servers, giving AI agents access to your app through standards-compliant OAuth flows with PKCE, dynamic client registration, scopes, and JWT verification, all out of the box.

For agentic workflows, WorkOS Pipes lets you grant agents time-limited access to OAuth connections, scope tokens to specific tools, and enforce permissions so agents can only invoke what they've been authorized to use. Combined with enterprise SSO, directory sync, and audit logs, you get the foundation for building MCP servers and agent-enabled apps that meet enterprise security requirements today.


Sources

Documented vulnerabilities

- Johann Rehberger, "Cross-Agent Privilege Escalation: When Agents Free Each Other", Embrace The Red, September 2025.

- Palo Alto Networks Unit 42, "When AI Agents Go Rogue: Agent Session Smuggling Attack in A2A Systems", November 2025.

IETF drafts and RFCs

- "Attenuating Authorization Tokens for Agentic Delegation Chains" (draft-niyikiza-oauth-attenuating-agent-tokens).

- "Cryptographically Verifiable Actor Chains for OAuth 2.0 Token Exchange" (draft-mw-spice-actor-chain).

- "OAuth Identity and Authorization Chaining Across Domains" (draft-ietf-oauth-identity-chaining).

- "TLS-Session-Bound Access Tokens for OAuth 2.0" (draft-mw-oauth-tls-session-bound-tokens).

- RFC 8693: OAuth 2.0 Token Exchange.

- RFC 9396: OAuth 2.0 Rich Authorization Requests.

- RFC 7523: JSON Web Token (JWT) Profile for OAuth 2.0 Client Authentication and Authorization Grants.

- IETF OAuth Working Group mailing list archive.

Standards bodies and industry research

- NIST AI Agent Standards Initiative, Center for AI Standards and Innovation, February 2026.

- NCCoE concept paper: Accelerating the Adoption of Software and AI Agent Identity and Authorization.

- Kundan Kolhe, "Control the Chain, Secure the System: Fixing AI Agent Delegation", Cloud Security Alliance / Okta, March 2026.

Industry products and announcements